Network management protocols and the networks they govern are rewriting each other. The rise of AI-scale infrastructure, hyperscale data centers, and disaggregated networking has exposed the seams in decades-old protocols, demanding a leap from periodic polls and fragile CLI scripts to millisecond-resolution streaming telemetry and transactional, model-driven configuration.

It is a compounding feedback loop: the demands of AI workloads are pushing networks to become faster, more programmable, and fully observable, and in return, next-generation protocols are delivering the structured, real-time intelligence that makes operating those networks at scale tractable.

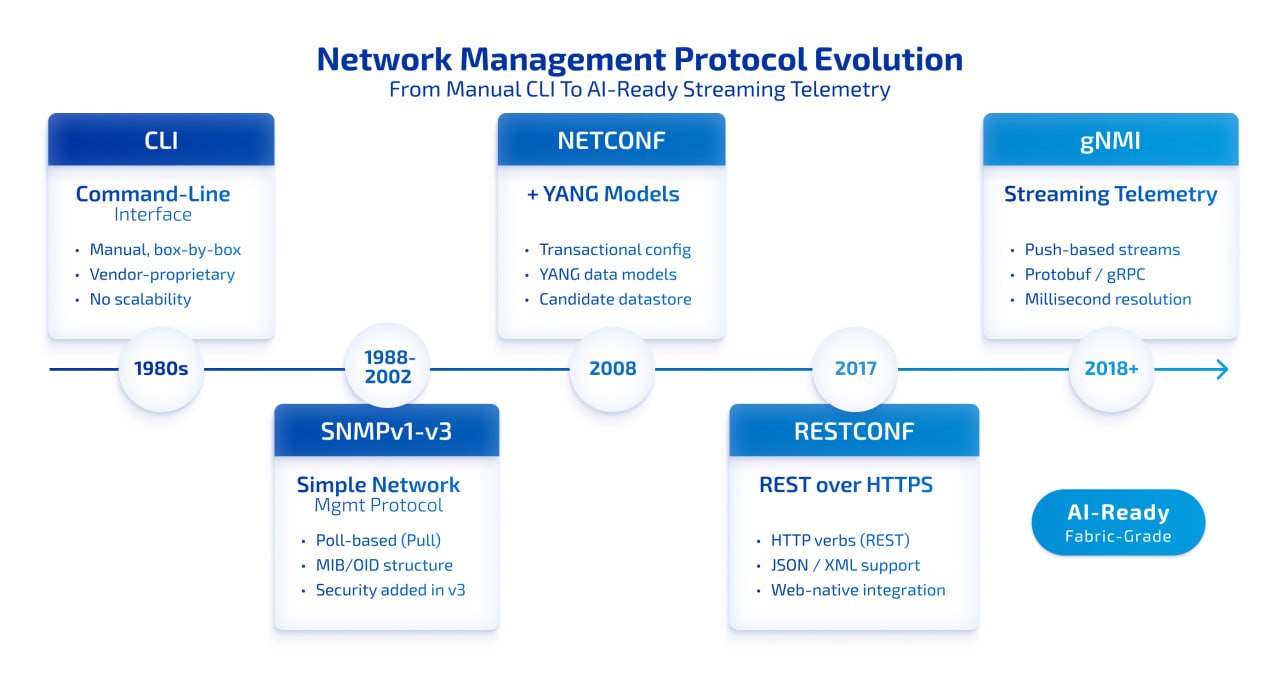

In this article, we trace the full arc of network management protocol evolution – from the imperative era of the CLI through the SNMP epoch, into the programmability revolution, and finally to the modern observability powerhouse of gNMI and streaming telemetry. Along the way, we examine how open networking and SONiC-based platforms are tying it all together – and what that means for teams building infrastructure for the AI era.

The Starting Point: Managing Networks Box by Box

In the earliest days of internetworking, there was no abstraction – only craft. Network engineers worked device by device, typing commands into vendor-specific CLIs that demanded deep knowledge of proprietary syntax and operational quirks. A configuration change on one router meant logging in, running commands, and hoping the rest of the network held together while you worked.

This “box-by-box” mentality was the only game in town for a long time. And while the CLI remains an indispensable tool for deep diagnostics and out-of-band access during automation failures, its limitations became increasingly glaring as networks scaled. Digital transformation – cloud adoption, IoT, and now AI – made manual intervention not merely inefficient but structurally untenable.

Key Shift: The network engineer’s role transformed from craftsman – typing commands – to architect, designing resilient automation systems and extracting network-wide insights from telemetry data.

This transformation was the primary catalyst for standardized network management protocols. The question was no longer whether to automate, but how to create protocols capable of managing vast, heterogeneous networks reliably.

The SNMP Epoch: Standardization, Scale, and Its Limits

By 1988, the internet community lacked a unified protocol for network management. Early efforts were either too simplistic or too demanding for the hardware of the era. An ad hoc committee chaired by Vint Cerf recommended the Simple Network Management Protocol (SNMP) as an interim solution – and that “interim” label turned out to be one of the great understatements in networking history.

SNMP became the foundational element of network management for decades. Its manager-agent architecture, where a Network Management System (NMS) queries software agents running on managed devices, was elegant in its simplicity. The Management Information Base (MIB) provided a hierarchical structure for organizing device data, with every object identified by a unique Object Identifier (OID).

Three Versions, Three Eras

SNMPv1 established the baseline: device monitoring for CPU, memory, and interface status, secured by little more than a plaintext community string. SNMPv2c improved performance with the GetBulk operation and added 64-bit counters for high-speed interfaces, but still relied on insecure community-based authentication. SNMPv3, standardized in 2002, finally introduced a security framework with the User-based Security Model (USM) for authentication and encryption, and the View-based Access Control Model (VACM) for access control.

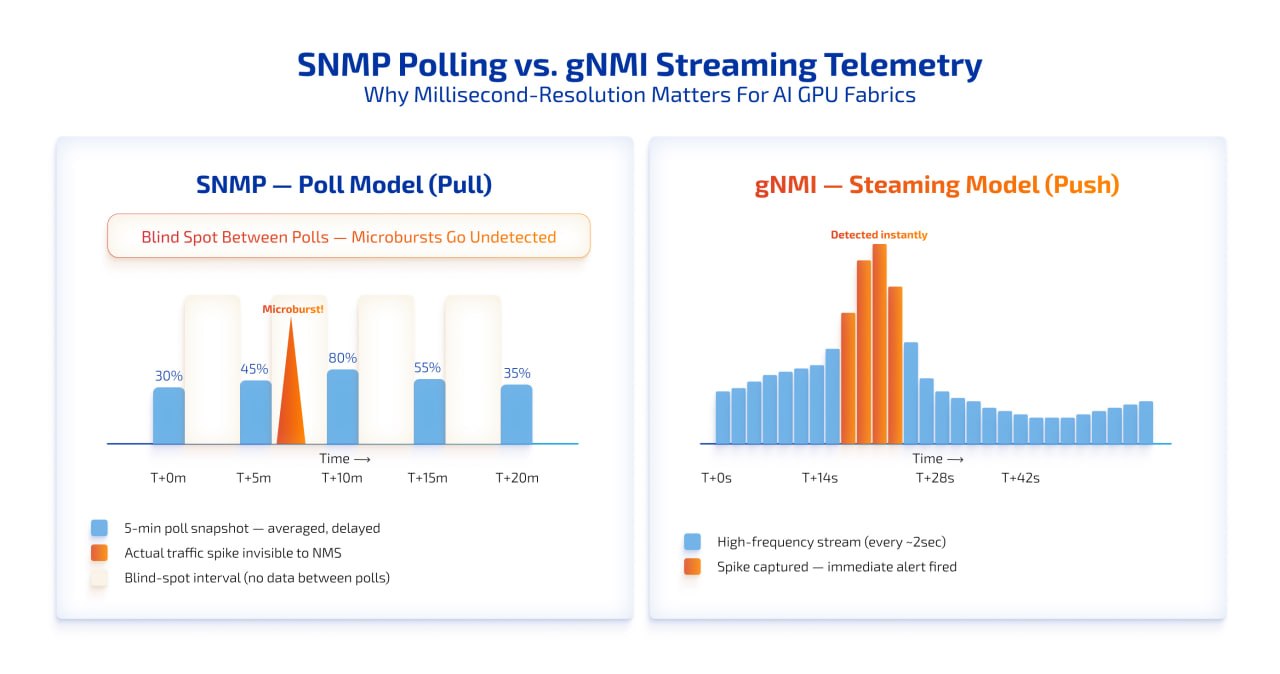

The Polling Tax and the Blind Spots

For all its longevity, SNMP carries structural constraints that the modern era cannot ignore. The protocol operates primarily on a “pull” model: the NMS must periodically query every device for updates. This creates a CPU burden on managed devices and, more critically, leaves dangerous visibility gaps. If a traffic spike and subsequent packet drops occur between 5-minute poll cycles, the NMS simply averages them away. Microbursts – the transient events that cause the most damage in AI GPU fabrics – become invisible.
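To make the averaging effect concrete, here is a minimal sketch with made-up numbers: a hypothetical 100 Gb/s link that sits at 10 Gb/s for a whole 5-minute window, apart from one second at line rate. The function names and traffic figures are illustrative assumptions, not measurements from a real device.

```python
# Illustrative only: synthetic per-second throughput for one 5-minute poll window.
LINK_GBPS = 100          # assumed link speed
WINDOW_SECONDS = 300     # one 5-minute poll interval


def build_window(baseline_gbps: float, burst_gbps: float, burst_second: int) -> list[float]:
    """Return per-second throughput containing a single one-second burst."""
    samples = [baseline_gbps] * WINDOW_SECONDS
    samples[burst_second] = burst_gbps
    return samples


def polled_average(samples: list[float]) -> float:
    """What a counter-delta poll can report: total volume spread over the interval."""
    return sum(samples) / len(samples)


def streamed_peak(samples: list[float]) -> float:
    """What per-second samples can expose: the worst single second."""
    return max(samples)


window = build_window(baseline_gbps=10.0, burst_gbps=100.0, burst_second=137)
avg = polled_average(window)
peak = streamed_peak(window)
print(f"5-minute average: {avg:.1f} Gb/s ({avg / LINK_GBPS:.1%} of link)")
print(f"1-second peak:    {peak:.1f} Gb/s ({peak / LINK_GBPS:.1%} of link)")
```

In this toy window the poll reports roughly 10% utilization even though the link was saturated for a full second. Real microbursts are usually far shorter than one second, so the dilution in practice is more extreme than this sketch suggests.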

Configuration management fares no better. SNMP Set operations offer no transactional integrity across requests: a single Set is applied as a unit, but there is no native way to stage and validate a multi-step change or roll it back if one step fails. In an era where a misconfiguration can cascade across a fabric in milliseconds, this is an unacceptable risk.

The SNMP Verdict: A resilient workhorse for baseline monitoring of legacy devices, printers, and UPS systems – but architecturally incapable of meeting the resolution and transactional demands of modern, AI-scale network operations.

The Programmability Revolution: NETCONF and YANG

The limitations of SNMP for configuration management – combined with the brittleness of CLI-based automation scripts – created the conditions for something different. NETCONF, published by the IETF in 2006 and updated in 2011, arrived with a defining ambition: treat the network as a distributed database and give engineers transactional tools to match.

Transactional Integrity: The ACID Concept

NETCONF’s most important departure from its predecessors is transactional configuration. On devices that support a candidate datastore, engineers can stage changes without touching the live network, validate them against a data model, and commit only when ready. The commit is atomic – it either succeeds completely or the device stays in its last stable state, preventing the “half-baked” configurations that plagued CLI scripting. This multi-phase mechanism fundamentally changes the risk profile of large-scale automation.
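The stage, validate, commit flow is easier to see in miniature. The sketch below is a toy in-memory model, not a NETCONF client: the `ToyDevice` class, its method names, and the MTU range it checks are all hypothetical stand-ins for a real device and its YANG constraints.

```python
import copy


class ToyDevice:
    """In-memory stand-in for a device with running and candidate datastores."""

    def __init__(self, running: dict):
        self.running = copy.deepcopy(running)
        self.candidate = copy.deepcopy(running)

    def edit_candidate(self, interface: str, mtu: int) -> None:
        """Stage a change; the running configuration is untouched."""
        self.candidate.setdefault(interface, {})["mtu"] = mtu

    def validate(self) -> None:
        """Hypothetical rule standing in for data-model validation."""
        for name, cfg in self.candidate.items():
            if not 68 <= cfg["mtu"] <= 9216:
                raise ValueError(f"{name}: mtu {cfg['mtu']} out of range")

    def commit(self) -> None:
        """Apply every staged change at once, or none of them."""
        try:
            self.validate()
        except ValueError:
            # Discard the staged changes so the next attempt starts clean.
            self.candidate = copy.deepcopy(self.running)
            raise
        self.running = copy.deepcopy(self.candidate)


device = ToyDevice({"Ethernet0": {"mtu": 1500}, "Ethernet4": {"mtu": 1500}})
device.edit_candidate("Ethernet0", 9100)
device.edit_candidate("Ethernet4", 99999)   # invalid on purpose
try:
    device.commit()
except ValueError as err:
    print("commit rejected:", err)
print(device.running)   # neither staged change reached the running config
```

The point of the design is that the valid change to `Ethernet0` is also withheld: a partially applied batch is exactly the outcome a transactional commit is meant to rule out.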

YANG: The Language That Makes It Real

NETCONF’s power is linked to YANG – Yet Another Next Generation – a data modeling language designed for the protocol. Where SNMP MIBs are loosely typed, table-oriented, and often vendor-specific, YANG models are hierarchical, strongly typed, and, when standard models are used, vendor-neutral. Engineers can write automation scripts that work consistently across multi-vendor environments, reducing the single-vendor lock-in that made network automation brittle for so long.

The trade-off is real: NETCONF requires fluency in XML and YANG schemas, a steeper learning curve than SNMP. But the payoff – reduced configuration drift, fewer operational errors, and reliable large-scale automation – makes it the clear choice for orchestration.
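For a sense of what that XML fluency involves, the following sketch builds an `<edit-config>` request aimed at the candidate datastore using only Python's standard library. The two namespaces are the NETCONF base namespace and the standard `ietf-interfaces` YANG module; the interface name, description, and `message-id` are placeholder assumptions, and nothing is sent to a device.

```python
import xml.etree.ElementTree as ET

NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
IF_NS = "urn:ietf:params:xml:ns:yang:ietf-interfaces"

# Readable prefixes for the serialized output.
ET.register_namespace("nc", NETCONF_NS)
ET.register_namespace("if", IF_NS)


def q(namespace: str, tag: str) -> str:
    """Return a namespace-qualified tag in ElementTree's {ns}tag form."""
    return f"{{{namespace}}}{tag}"


def build_edit_config(name: str, description: str, message_id: str = "101") -> str:
    """Build an <edit-config> RPC that stages an interface description."""
    rpc = ET.Element(q(NETCONF_NS, "rpc"), {"message-id": message_id})
    edit = ET.SubElement(rpc, q(NETCONF_NS, "edit-config"))
    target = ET.SubElement(edit, q(NETCONF_NS, "target"))
    ET.SubElement(target, q(NETCONF_NS, "candidate"))
    config = ET.SubElement(edit, q(NETCONF_NS, "config"))
    interfaces = ET.SubElement(config, q(IF_NS, "interfaces"))
    interface = ET.SubElement(interfaces, q(IF_NS, "interface"))
    ET.SubElement(interface, q(IF_NS, "name")).text = name
    ET.SubElement(interface, q(IF_NS, "description")).text = description
    return ET.tostring(rpc, encoding="unicode")


payload = build_edit_config("Ethernet0", "uplink to spine-1")
print(payload)
```

In practice a client library would normally handle framing, sessions, and the follow-up `<commit>`; the sketch only shows how the YANG model's containers and leaves map onto nested XML elements.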

Bridging to the Web: RESTCONF

As networking systems began integrating more tightly with cloud-native toolchains and DevOps pipelines, a protocol emerged that spoke the language of web developers. RESTCONF, published as RFC 8040 in 2017, provides a RESTful interface for accessing the same YANG-modeled data that NETCONF manages, but using familiar HTTP verbs – GET, POST, PUT, PATCH, DELETE.

RESTCONF operates over HTTPS and supports both XML and JSON serialization, making it a natural fit for teams whose tooling already revolves around web APIs. It is stateless and lighter than NETCONF’s session-based model, which suits simple web integrations and one-off API calls to network software.
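As a rough illustration of how the same YANG data looks through RESTCONF, the sketch below prepares (but does not send) a PATCH request with the standard library. The host name is hypothetical, and the `/restconf` root is an assumption: RFC 8040 lets a server advertise a different root, so a real client should discover it rather than hard-code it.

```python
import json
import urllib.request
from urllib.parse import quote

YANG_JSON = "application/yang-data+json"


def build_restconf_patch(host: str, interface: str, description: str) -> urllib.request.Request:
    """Prepare a PATCH that updates one interface's description."""
    # List keys go in the URL; characters such as "/" must be percent-encoded.
    key = quote(interface, safe="")
    url = f"https://{host}/restconf/data/ietf-interfaces:interfaces/interface={key}"
    body = {
        "ietf-interfaces:interface": [
            {"name": interface, "description": description}
        ]
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={"Content-Type": YANG_JSON, "Accept": YANG_JSON},
    )


request = build_restconf_patch("switch.example.net", "Ethernet1/1", "uplink to spine-1")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Compared with the NETCONF equivalent there is no session to open and no XML envelope, which is much of RESTCONF's appeal. Authentication and TLS verification are omitted here and would be needed before passing the request to `urllib.request.urlopen`.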

The trade-off is scope: RESTCONF typically operates only against the running configuration datastore and often lacks the multi-datastore transactional capabilities required for complex, bulk automation. For heavyweight orchestration, NETCONF remains the more appropriate tool. For quick, targeted API calls to a well-defined endpoint, RESTCONF earns its place.

Protocol Comparison: The Evolution at a Glance

The right protocol depends on your operational goal, environment maturity, and infrastructure scale. The table below maps the key dimensions:

| Metric | SNMPv3 | NETCONF | RESTCONF | gNMI |
|---|---|---|---|---|
| Protocol Age | Established (Legacy) | Mature (2006+) | Modern (2017) | Cutting-Edge |
| Data Structure | MIB (OID tree, loosely typed) | YANG (Hierarchical) | YANG (Structured) | YANG (Structured) |
| Encoding | BER (Binary) | XML (Text) | XML or JSON | Protobuf (Binary) |
| Transport | UDP | SSH / TLS | HTTPS (TLS) | gRPC over HTTP/2 |
| Transactions | None | Atomic (Commit/Rollback) | Limited | Atomic |
| Data Collection | Polling (Pull) | RPC-based (Pull) | API-based (Pull) | Streaming (Push) |
| Resolution | Low (Minutes) | Medium (Seconds) | Medium | High (Milliseconds) |

The Modern Observability Powerhouse: gNMI and Streaming Telemetry

The most significant leap in protocol evolution belongs to gNMI – the gRPC Network Management Interface, a project of the OpenConfig community. Where SNMP polls and NETCONF pulls, gNMI pushes. Built on the gRPC framework operating over HTTP/2, it was designed from the ground up for the extreme scalability and real-time performance demands of hyperscale data centers and high-frequency AI GPU fabrics.

Why the Architecture Matters

The engineering choices behind gNMI compound into advantages. Protocol Buffers encoding is binary and compact, slashing the bandwidth consumed by management traffic compared to XML or JSON. HTTP/2 multiplexing allows multiple concurrent streams over a single TCP connection, eliminating the session-establishment overhead that becomes a bottleneck for NETCONF at scale. Strong typing reduces parsing errors and simplifies integration with automated analysis pipelines.
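The compactness claim can be checked with a few lines of arithmetic. This sketch hand-encodes a single unsigned integer field using the Protocol Buffers varint wire format and compares its size with a JSON rendering of the same counter. It is an illustration of the encoding only: the field number, the `in-octets` key, and the counter value are assumptions, and a real gNMI message also carries paths, timestamps, and typed values.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protocol Buffers base-128 varint."""
    if value < 0:
        raise ValueError("this sketch handles unsigned values only")
    out = bytearray()
    while True:
        low7 = value & 0x7F
        value >>= 7
        if value:
            out.append(low7 | 0x80)   # continuation bit: more bytes follow
        else:
            out.append(low7)
            return bytes(out)


def encode_uint_field(field_number: int, value: int) -> bytes:
    """Tag (field number plus wire type 0) followed by the varint payload."""
    tag = (field_number << 3) | 0
    return encode_varint(tag) + encode_varint(value)


counter = 1_234_567_890_123   # hypothetical interface octet counter
binary = encode_uint_field(1, counter)
text = json.dumps({"in-octets": counter}).encode("utf-8")
print(len(binary), "bytes as a protobuf field")
print(len(text), "bytes as JSON")
```

Multiplied across thousands of counters per device and per-second update rates, the per-value saving is where the bandwidth reduction comes from.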

The Subscribe RPC: From Polling to Streaming

The core innovation is the Subscribe RPC. Rather than answering one poll at a time, a device accepts a subscription and then streams high-fidelity telemetry as it is generated. Within STREAM mode, SAMPLE subscriptions push data at a fixed interval – every 10 seconds, every second, or faster – while ON_CHANGE subscriptions fire only when a monitored attribute actually changes, providing immediate alerting for link flaps or configuration drift without the constant overhead of polling.
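The difference between interval-based and change-based delivery can be sketched without any gNMI tooling. In the gNMI specification these are the SAMPLE and ON_CHANGE sub-modes of a STREAM subscription; the code below only imitates their delivery semantics over a hypothetical series of port states and is not a gNMI client.

```python
from typing import Iterable

Reading = tuple[int, str]   # (timestamp in seconds, observed value)


def sample(readings: Iterable[Reading], interval_s: int) -> list[Reading]:
    """SAMPLE-style delivery: emit the current value every interval_s seconds."""
    return [(t, v) for t, v in readings if t % interval_s == 0]


def on_change(readings: Iterable[Reading]) -> list[Reading]:
    """ON_CHANGE-style delivery: emit the first value, then only differences."""
    updates = []
    previous = None
    for t, v in readings:
        if v != previous:
            updates.append((t, v))
            previous = v
    return updates


# Hypothetical oper-status of one port, observed once per second for 12 seconds.
status = ["UP"] * 12
status[7] = "DOWN"            # a one-second link flap
readings = list(enumerate(status))

print(sample(readings, interval_s=5))   # the flap falls between samples
print(on_change(readings))              # the flap and the recovery both appear
```

With a 5-second sample interval the flap at second 7 never shows up, while the change-based view reports both the drop and the recovery with only three updates. That is why state-like values suit ON_CHANGE and fast-moving counters suit SAMPLE.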

This high-definition view of network health enables engineers to catch transient packet drops the moment they occur. For modern architectures where an AI training job’s completion time is directly tied to fabric performance, this is the baseline requirement.

SNMP Polling vs. gNMI Streaming: How microbursts become invisible under a 5-minute poll cycle, and how gNMI catches them the moment they occur

Why gNMI Is Mandatory for AI Fabrics: Sub-second streaming telemetry is the practical way to detect the microbursts, buffer congestion, and PFC pause events that silently degrade GPU-to-GPU communication over RoCE and inflate job completion time.

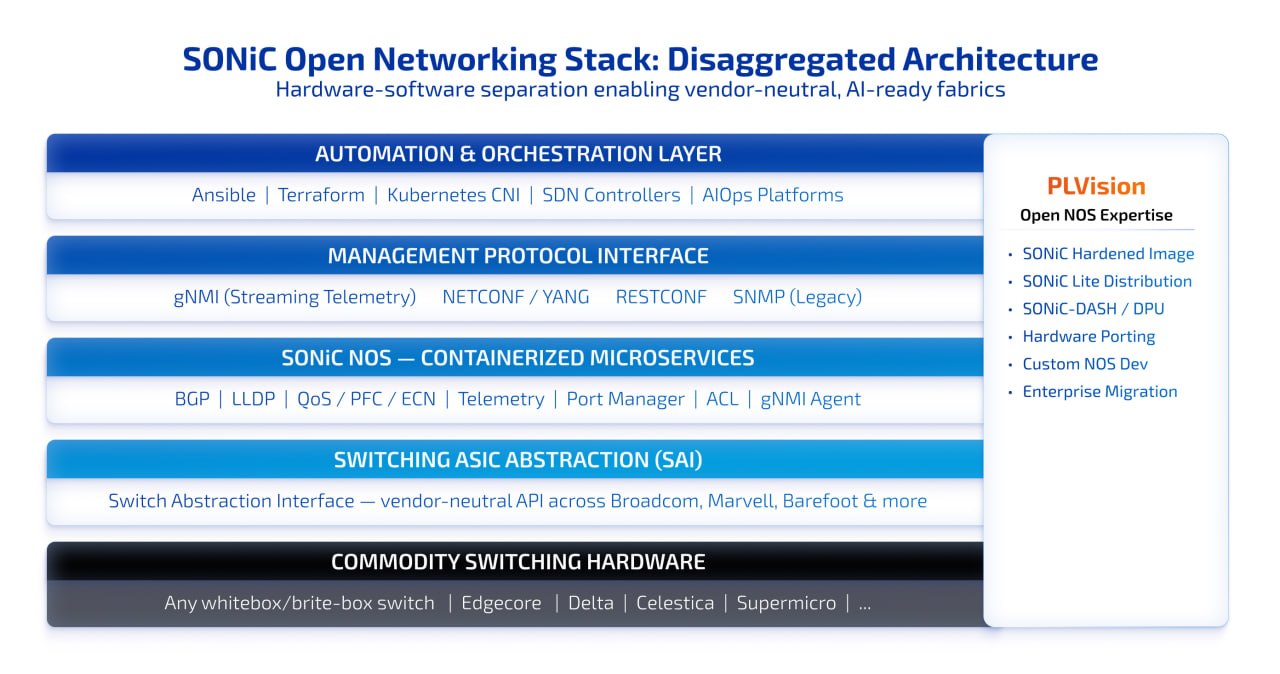

Open Networking and SONiC: The Platform That Ties It Together

The evolution of protocols is occurring in parallel with a structural shift in how networks are built: the disaggregation of the network stack. By separating the Network Operating System from the underlying hardware, disaggregation opens the door to vendor-neutral fabrics, commodity hardware economics, and the ability to choose best-of-breed components without being locked into a single ecosystem.

SONiC – Software for Open Networking in the Cloud – has emerged as the leading open-source NOS for this new world. Built on Linux, SONiC implements management protocols including gNMI and NETCONF as containerized microservices, providing a unified, model-driven interface for SDN controllers and automation platforms.

SONiC Disaggregated Architecture: Five layers from commodity hardware to orchestration, with PLVision’s open NOS expertise mapped to each

What Makes SONiC Ready for AI Workloads

SONiC’s fit for AI fabrics is practical and measurable. It supports RoCE (RDMA over Converged Ethernet) for efficient GPU-to-GPU data movement, reducing communication bottlenecks during distributed training. Its QoS and congestion control capabilities – Priority Flow Control, ECN, Enhanced Transmission Selection – allow operators to prioritize critical GPU traffic and minimize packet loss under heavy incast conditions. Advanced telemetry via gRPC/gNMI provides the high-frequency streaming that modern AIOps platforms depend on.

P4 programmability through stacks like PINS enables centralized traffic engineering and in-network compute offloads, including switch-assisted collective operations like All-Reduce that reduce host CPU overhead in large AI clusters.

That said, Community SONiC is powerful but raw. Deploying it safely in mission-critical AI workloads requires hardening, rigorous QA, and lifecycle management – the gap between a promising open-source build and a production-grade distribution.

Partnering for Production: Where PLVision Fills the Gap

Understanding the protocol landscape is one thing. Building infrastructure that operates at the intersection of gNMI streaming telemetry, SONiC’s open NOS architecture, and the relentless performance demands of AI workloads is another challenge entirely.

PLVision blends deep switch-software engineering with active community leadership: we build hardened SONiC images, port to networking hardware, and deliver product-grade distributions. In plain terms: we take open networking from prototype to predictable production, while keeping your stack vendor-neutral and future-ready:

- Custom product development based on open-source technologies: SONiC-based product development services for Telco use cases, and SwitchDev development – delivering purpose-built network products without reinventing the foundation.

- Open NOS enablement and customization for hardware: encompassing SONiC-DASH API implementation, porting Community SONiC to your hardware, and xPU/SmartNIC offload customization and support.

- Network infrastructure transition to open NOS: SONiC adoption for enterprise networks and generating Community SONiC hardened images for data centers – reducing risk at every step of the migration.

- SONiC Lite, PLVision’s enterprise distribution of Community SONiC, designed for cost-effective management and access platforms – ideal for data center, campus, and edge deployments.

By owning your SONiC distribution, you gain full control, eliminate vendor lock-in and licensing fees, and align your infrastructure with AI’s demands.

Ready to move from proof-of-concept to production? Working with experienced open networking partners shortens the path dramatically – and keeps the door open for whatever the next generation of protocols and hardware brings.

A Practical Protocol Strategy for Modern Teams

In most real-world environments, a hybrid strategy is the most sensible approach, deploying each protocol where its architecture is the best fit:

- SNMPv3 – Retain for baseline monitoring of legacy devices, printers, UPS systems, and any infrastructure where polling latency is not a critical constraint.

- NETCONF – The preferred choice for reliable, large-scale configuration and orchestration, particularly when integrating with tools like Ansible or Terraform.

- RESTCONF – Deploy for lightweight web integration and stateless API calls to network software where a simpler HTTP interface is sufficient.

- gNMI – Mandatory for high-frequency telemetry, real-time NOC dashboards, event-driven automation pipelines, and any environment running AI workloads.

The key insight is that these protocols are complementary. The teams that thrive are those that understand where each sits in the stack and build automation that leverages all of them.

Final Thought: Design for the Protocol, Not the Snapshot

The evolution from CLI commands to streaming gNMI telemetry is a fundamental reimagining of what a network is. We have moved from a static collection of individually managed devices to a dynamic, programmable fabric that functions as a critical engine for business innovation.

The protocols that define this new era – NETCONF’s transactional integrity, RESTCONF’s web-native accessibility, gNMI’s real-time streaming – are foundations. The next wave, already emerging, brings AI-driven operations that plan multi-step investigations autonomously, networks that self-heal before a human sees an alert, and open-source ecosystems that make hyperscale-grade capabilities available to teams of any size.

By embracing open standard protocols and the disaggregated architectures they enable, organizations can build networks that are not only more resilient and cost-effective today, but capable of participating in the AI-driven transformation that is already underway.